How we work

Design. Build. Transform.

As an end-to-end partner, we cover the full journey - from designing strategies to building scalable technology. Cross-disciplinary collaboration. Fast validation of value. Tailored to your needs.

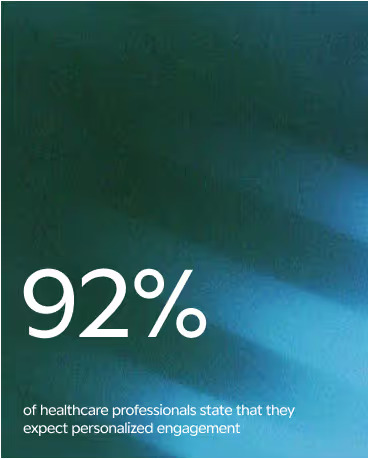

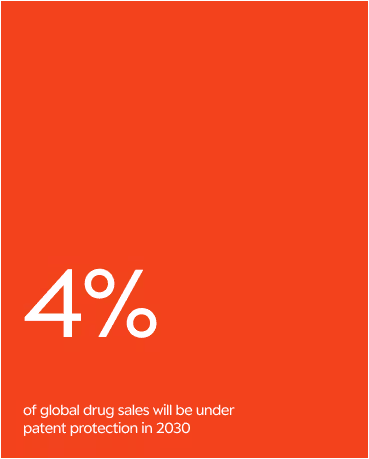

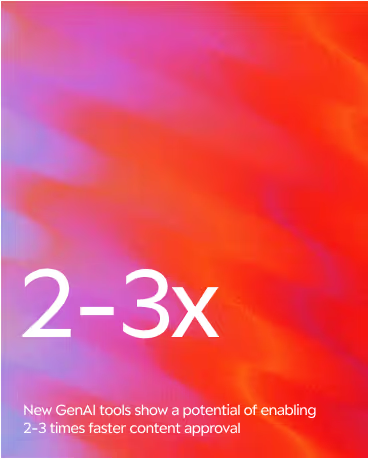

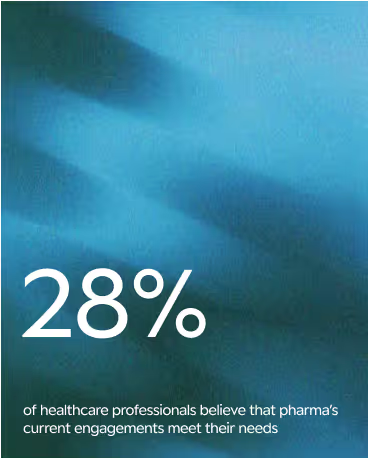

Some of the opportunities we care about right now

We go deep where the challenges are complex and the potential for progress is high.

Below are some of the opportunity spaces that guide how we think, collaborate, design, and build right now.

Our disciplines reflect how we work - blending strategy, data, and technology to solve real-world challenges. Flexible and collaborative, we're not bound to any single technology or framework. Below are examples of how we can deliver end-to-end solutions tailored to your goals.

Setting the direction

From actionable data and AI strategies to data architecture designs and omnichannel orchestration, we help organizations set a clear course for lasting impact.

Making it real

From advanced AI/ML models and scalable enterprise data platforms to predictive analytics tools and decision-support applications, we help our partners turn strategy into working solutions.

Bringing it to life

From operating model redesigns and governance frameworks to capability-building programs and adoption roadmaps, we help our partners make change last.

Cases we're proud of

Whether the challenge is improving customer experience, streamlining the patient journey, unlocking the power of data, or navigating complex regulations - we’re ready to tackle it with you and turn ambition into impact.

Preparing for the Patent Cliff

Explore how we helped a global pharma organization move from manual, one-off loss-of-exclusivity (LoE) analyses to a scalable, driver-based ML forecasting engine.

GenAI Media Assistant

Learn how we helped a pharma company build a global Paid Media data foundation, enabling real-time campaign decisions powered by GenAI.

Trusted by organizations pioneering health

Intellishore is a proven partner in turning data and AI transformation projects into real, tangible outcomes.

Insights

From strategy to technology, we’re continuously exploring and learning what can make a real difference in the health ecosystem - not just in systems and structures, but also for the people working every day to create better health journeys.