Snowflake Data Platform

Snowflake is a data platform built for the cloud. It consolidates data warehousing, data marts and data lakes into a single platform in order to make all data available to all business users. The architecture consists of three layers:

- Database storage: Cloud storage allows organizations to store all data in a scalable and inexpensive manner. Data is stored in its native format without the need for time-consuming transformations.

- Processing: Virtual warehouses provide the compute resources that execute the data-processing tasks required for queries.

- Cloud services: Coordinates the entire system and manages security, optimization and metadata.

Using Snowflake, you can effectively reinvent data warehousing and eliminate complexity related to integrating different data sources and types.

Why is it Snowflake cannot be ignored?

“Why should you pay for something not currently in use? Why should you manually relate to scalability? Why should there be downtime relating to scalability and database changes? These are some of the things I admire Snowflake for solving. You shouldn’t have to analyze and guess the number of CPUs you need at 8:00 in the morning or 18:00 in the evening.

Less-Than-1-Second Redeployment Time with Snowflake

Other technologies provide something similar, but they fall short since the redeployment time for compute is often more than 1 minute. I don’t know how Snowflake has managed to solve this, but I think a lot of people are jealous of their less-than-1-second redeployment time.

Snowflake Zero-Copy Clone

To me, Zero-copy clone is a unique way of testing for corrections and alterations in a 1:1 setup such as production. I have seen a lot of heavy solutions on how to synchronize development, test and production setups in your database, but I have not previously seen a well-working solution. Zero-copy clone provides exactly that. It takes a meta-data copy of e.g. a production database for a test environment in 1 second. Since it only copies meta-data and not actual data, the cost of this is next to nothing.”

Amit Luthra, Founding Partner at Intellishore
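
As a rough illustration of the zero-copy clone described in the quote above, the sketch below builds the SQL statement that clones a database. The `CREATE DATABASE ... CLONE ...` form is Snowflake SQL syntax; the helper name `clone_statement` and the database names `PROD_DB` and `TEST_DB` are hypothetical, and the snippet only produces the statement text instead of connecting to an account.

```python
import re

# Unquoted identifiers: letters, digits, underscores and dollar signs,
# starting with a letter or an underscore.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_$]*$")


def clone_statement(source_db: str, target_db: str) -> str:
    """Build a CREATE DATABASE ... CLONE statement (hypothetical helper)."""
    for name in (source_db, target_db):
        # Reject anything that is not a simple identifier, so the generated
        # statement cannot carry extra SQL.
        if not _IDENTIFIER.match(name):
            raise ValueError(f"not a simple identifier: {name!r}")
    return f"CREATE DATABASE {target_db} CLONE {source_db};"


if __name__ == "__main__":
    # Assumed names: PROD_DB is the production database, TEST_DB the clone.
    print(clone_statement("PROD_DB", "TEST_DB"))
```

Because the clone is a metadata operation, the statement itself stays this short; the generated text could then be run from any SQL client or connector.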

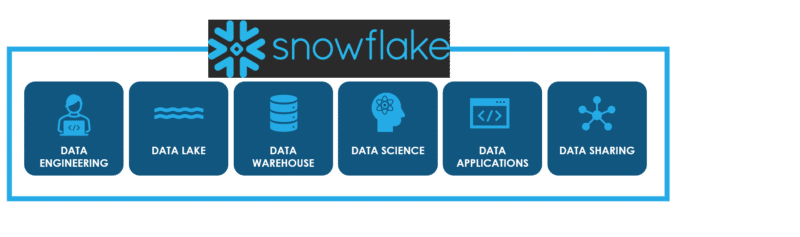

Snowflake supports a multitude of workloads…

Snowflake’s patented multi-cluster shared data architecture delivers a platform that enables many different workloads. These workloads include data warehouses, data lakes, data pipelines and data exchanges. They also include many types of business intelligence, data science and data analytics applications.

… and different data types and sources

The agnostic nature of Snowflake supports the handling and optimization of both structured and semi-structured data. The latter includes formats such as JSON, Avro and XML. The platform includes standards-based connectors and drivers such as ODBC, JDBC, JavaScript, Python, Spark, R, and Node.js. As a result, developers can keep working with the tools, languages and frameworks they already use.
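
To make the semi-structured point concrete, here is a minimal sketch of how a nested JSON field might be addressed. Snowflake SQL can reach into such data with a colon and dot path followed by a `::` cast; the event contents, the column name `payload`, and the helpers `path_expression` and `lookup` are assumptions made up for this illustration.

```python
import json

# Hypothetical JSON event, as it might be loaded unchanged into a table column.
RAW_EVENT = '{"customer": {"name": "Ada", "country": "DK"}, "amount": 120.5}'


def path_expression(column: str, path: list[str], cast: str) -> str:
    """Render a path in colon/dot notation, e.g. payload:customer.name::string."""
    return f"{column}:{'.'.join(path)}::{cast}"


def lookup(document: dict, path: list[str]):
    """Follow the same path through the parsed JSON in plain Python."""
    value = document
    for key in path:
        value = value[key]
    return value


if __name__ == "__main__":
    path = ["customer", "name"]
    # The SQL-side expression that would address the nested field.
    print(path_expression("payload", path, "string"))
    # The equivalent lookup on the parsed document.
    print(lookup(json.loads(RAW_EVENT), path))
```

The point of the sketch is that the JSON document is queried in place, without first being flattened into relational columns.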

Furthermore, Snowflake Data Marketplace allows you to discover new data sets and services.

Global Sharing Across Providers

In an effort to help mitigate data silos within both large and small organizations, Snowflake allows for global sharing of data. When requested, this happens instantaneously without anyone having to copy or move data.

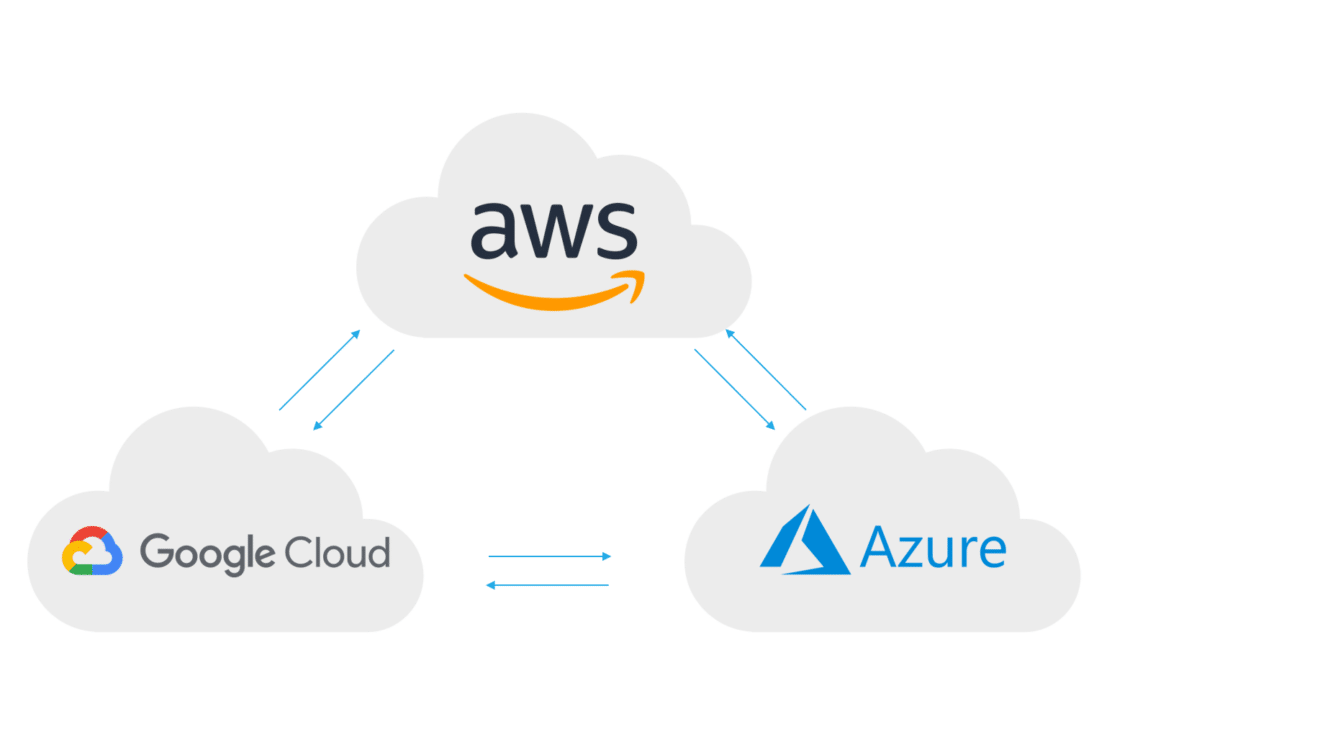

Cloud Agnostic Platform

The platform is also cloud agnostic. Not only does Snowflake have the ability to distribute data across regions, it can also distribute data across different cloud providers including AWS, Google Cloud, and Microsoft Azure.

This allows large organizations to break down data silos and obtain unified insights from a single, all-encompassing data platform.

One platform that powers the data cloud

“Snowflake is the first native data platform that has been built for the cloud. Our unique architecture offers our customers a true cross-cloud and multi-cloud approach across any geography. It also enables them to share data in a fast, secure, and seamless manner that was not previously possible.

Enrich your data with Snowflake

Snowflake customers can truly democratize their data and share it both with internal business units, but also externally with their wider ecosystem. Customers can instantly reach organizations in the Data Cloud through Snowflake Marketplace and can enrich their own data with access to more than 1,500 live and ready-to-query data sets from over 300 third-party data providers and data service providers.

In addition to mobilizing and sharing data, organizations can build applications directly on Snowflake’s platform and monetize these applications on Snowflake Marketplace to share more widely and with the highest level of security.

Customers’ Needs First

We enable our customers to work with data in an entirely new way and for that, we need partners who are innovative, forward-thinking, and always put the customers’ needs first. That is why our partnership with Intellishore has been extremely successful, as they have been able to help us unlock new ways to drive value for joint-customers by offering modern, best-of-breed data solutions.”

Christian Lindtorp Andersen, Country Manager at Snowflake Denmark

True Software as a Service Solution

Snowflake operates as a true software-as-a-service solution. Its fully managed service layer handles user sessions and resources, enforces security measures, compiles queries, enables data governance, and ensures atomicity, consistency, isolation, and durability (ACID).

With Snowflake, you get technology that is fully automated and capable of managing and servicing the infrastructure. This allows organizations to focus on analyzing and gaining insights from data, rather than spending resources on maintaining the data platform.